Graphchain is like joblib.Memory for dask graphs. Dask graph computations are cached to a local or remote location of your choice, specified by a PyFilesystem FS URL.

When you change your dask graph (by changing a computation's implementation or its inputs), graphchain will take care to only recompute the minimum number of computations necessary to fetch the result. This allows you to iterate quickly over your graph without spending time on recomputing previously computed keys.

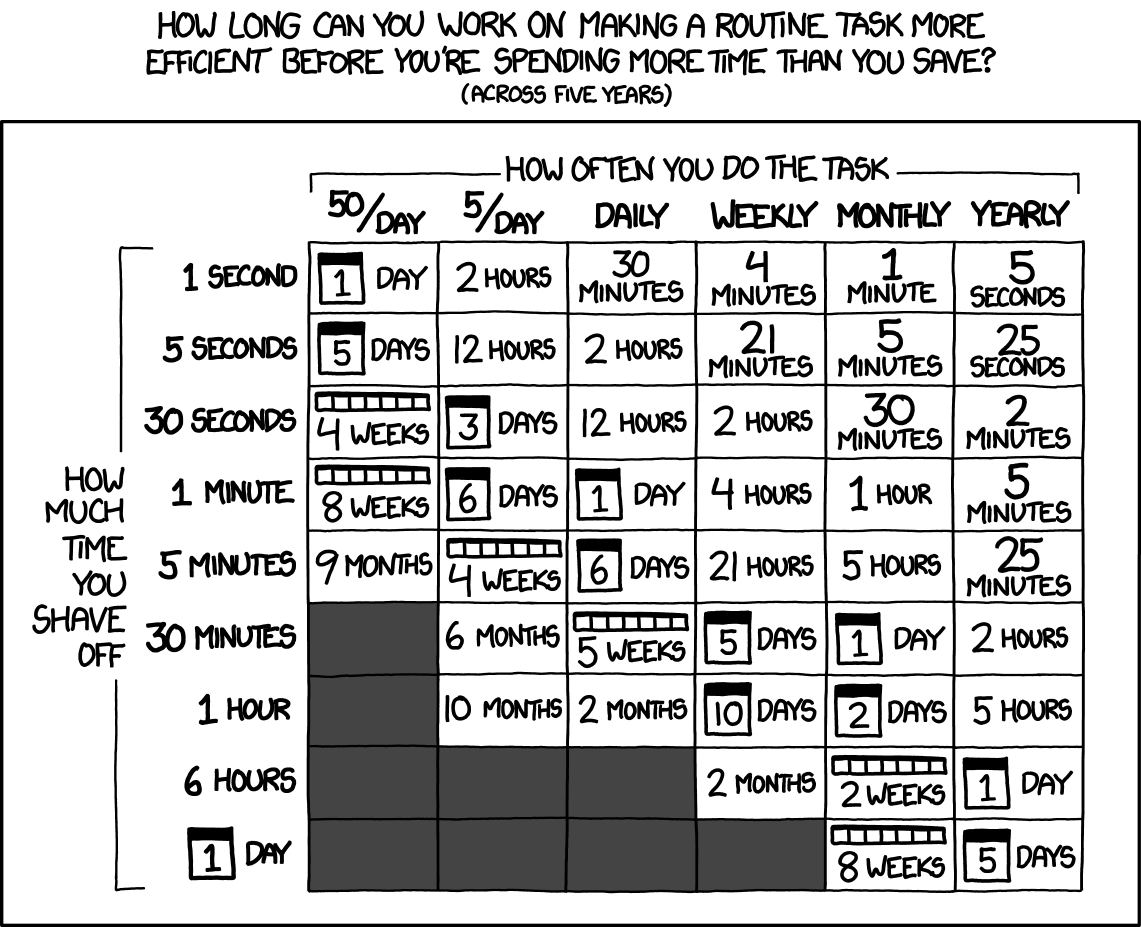

Source: xkcd.com/1205/

The main difference between graphchain and joblib.Memory is that in graphchain a computation's materialised inputs are not serialised and hashed (which can be very expensive when the inputs are large objects such as pandas DataFrames). Instead, a chain of hashes (hence the name graphchain) of the computation object and its dependencies (which are also computation objects) is used to identify the cache file.

Additionally, the result of a computation is only cached if it is estimated that loading that computation from cache will save time compared to simply computing the computation. The decision on whether to cache depends on the characteristics of the cache location, which are different when caching to the local filesystem compared to caching to S3 for example.

Install graphchain with pip to get started:

pip install graphchainTo demonstrate how graphchain can save you time, let's first create a simple dask graph that (1) creates a few pandas DataFrames, (2) runs a relatively heavy operation on these DataFrames, and (3) summarises the results.

import dask

import graphchain

import pandas as pd

def create_dataframe(num_rows, num_cols):

print("Creating DataFrame...")

return pd.DataFrame(data=[range(num_cols)]*num_rows)

def expensive_computation(df, num_quantiles):

print("Running expensive computation on DataFrame...")

return df.quantile(q=[i / num_quantiles for i in range(num_quantiles)])

def summarize_dataframes(*dfs):

print("Summing DataFrames...")

return sum(df.sum().sum() for df in dfs)

dsk = {

"df_a": (create_dataframe, 10_000, 1000),

"df_b": (create_dataframe, 10_000, 1000),

"df_c": (expensive_computation, "df_a", 2048),

"df_d": (expensive_computation, "df_b", 2048),

"result": (summarize_dataframes, "df_c", "df_d")

}Using dask.get to fetch the "result" key takes about 6 seconds:

>>> %time dask.get(dsk, "result")

Creating DataFrame...

Running expensive computation on DataFrame...

Creating DataFrame...

Running expensive computation on DataFrame...

Summing DataFrames...

CPU times: user 7.39 s, sys: 686 ms, total: 8.08 s

Wall time: 6.19 sOn the other hand, using graphchain.get for the first time to fetch 'result' takes only 4 seconds:

>>> %time graphchain.get(dsk, "result")

Creating DataFrame...

Running expensive computation on DataFrame...

Summing DataFrames...

CPU times: user 4.7 s, sys: 519 ms, total: 5.22 s

Wall time: 4.04 sThe reason graphchain.get is faster than dask.get is because it can load df_b and df_d from cache after df_a and df_c have been computed and cached. Note that graphchain will only cache the result of a computation if loading that computation from cache is estimated to be faster than simply running the computation.

Running graphchain.get a second time to fetch "result" will be almost instant since this time the result itself is also available from cache:

>>> %time graphchain.get(dsk, "result")

CPU times: user 4.79 ms, sys: 1.79 ms, total: 6.58 ms

Wall time: 5.34 msNow let's say we want to change how the result is summarised from a sum to an average:

def summarize_dataframes(*dfs):

print("Averaging DataFrames...")

return sum(df.mean().mean() for df in dfs) / len(dfs)If we then ask graphchain to fetch "result", it will detect that only summarize_dataframes has changed and therefore only recompute this function with inputs loaded from cache:

>>> %time graphchain.get(dsk, "result")

Averaging DataFrames...

CPU times: user 123 ms, sys: 37.2 ms, total: 160 ms

Wall time: 86.6 msGraphchain's cache is by default ./__graphchain_cache__, but you can ask graphchain to use a cache at any PyFilesystem FS URL such as s3://mybucket/__graphchain_cache__:

graphchain.get(dsk, "result", location="s3://mybucket/__graphchain_cache__")In some cases you may not want a key to be cached. To avoid writing certain keys to the graphchain cache, you can use the skip_keys argument:

graphchain.get(dsk, "result", skip_keys=["result"])Alternatively, you can use graphchain together with dask.delayed for easier dask graph creation:

import dask

import pandas as pd

@dask.delayed

def create_dataframe(num_rows, num_cols):

print("Creating DataFrame...")

return pd.DataFrame(data=[range(num_cols)]*num_rows)

@dask.delayed

def expensive_computation(df, num_quantiles):

print("Running expensive computation on DataFrame...")

return df.quantile(q=[i / num_quantiles for i in range(num_quantiles)])

@dask.delayed

def summarize_dataframes(*dfs):

print("Summing DataFrames...")

return sum(df.sum().sum() for df in dfs)

df_a = create_dataframe(num_rows=10_000, num_cols=1000)

df_b = create_dataframe(num_rows=10_000, num_cols=1000)

df_c = expensive_computation(df_a, num_quantiles=2048)

df_d = expensive_computation(df_b, num_quantiles=2048)

result = summarize_dataframes(df_c, df_d)After which you can compute result by setting the delayed_optimize method to graphchain.optimize:

import graphchain

from functools import partial

optimize_s3 = partial(graphchain.optimize, location="s3://mybucket/__graphchain_cache__/")

with dask.config.set(scheduler="sync", delayed_optimize=optimize_s3):

print(result.compute())By default graphchain will cache dask computations with joblib.dump and LZ4 compression. However, you may also supply a custom serialize and deserialize function that writes and reads computations to and from a PyFilesystem filesystem, respectively. For example, the following snippet shows how to serialize dask DataFrames with dask.dataframe.to_parquet, while other objects are serialized with joblib:

import dask.dataframe

import graphchain

import fs.osfs

import joblib

import os

from functools import partial

from typing import Any

def custom_serialize(obj: Any, fs: fs.osfs.OSFS, key: str) -> None:

"""Serialize dask DataFrames with to_parquet, and other objects with joblib.dump."""

if isinstance(obj, dask.dataframe.DataFrame):

obj.to_parquet(os.path.join(fs.root_path, "parquet", key))

else:

with fs.open(f"{key}.joblib", "wb") as fid:

joblib.dump(obj, fid)

def custom_deserialize(fs: fs.osfs.OSFS, key: str) -> Any:

"""Deserialize dask DataFrames with read_parquet, and other objects with joblib.load."""

if fs.exists(f"{key}.joblib"):

with fs.open(f"{key}.joblib", "rb") as fid:

return joblib.load(fid)

else:

return dask.dataframe.read_parquet(os.path.join(fs.root_path, "parquet", key))

optimize_parquet = partial(

graphchain.optimize,

location="./__graphchain_cache__/custom/",

serialize=custom_serialize,

deserialize=custom_deserialize

)

with dask.config.set(scheduler="sync", delayed_optimize=optimize_parquet):

print(result.compute())Setup: once per device

- Generate an SSH key and add the SSH key to your GitHub account.

- Configure SSH to automatically load your SSH keys:

cat << EOF >> ~/.ssh/config Host * AddKeysToAgent yes IgnoreUnknown UseKeychain UseKeychain yes EOF

- Install Docker Desktop.

- Enable Use Docker Compose V2 in Docker Desktop's preferences window.

- Linux only:

- Configure Docker and Docker Compose to use the BuildKit build system. On macOS and Windows, BuildKit is enabled by default in Docker Desktop.

- Export your user's user id and group id so that files created in the Dev Container are owned by your user:

cat << EOF >> ~/.bashrc export UID=$(id --user) export GID=$(id --group) EOF

- Install VS Code and VS Code's Remote-Containers extension. Alternatively, install PyCharm.

- Optional: Install a Nerd Font such as FiraCode Nerd Font with

brew tap homebrew/cask-fonts && brew install --cask font-fira-code-nerd-fontand configure VS Code or configure PyCharm to use'FiraCode Nerd Font'.

- Optional: Install a Nerd Font such as FiraCode Nerd Font with

Setup: once per project

- Clone this repository.

- Start a Dev Container in your preferred development environment:

- VS Code: open the cloned repository and run Ctrl/⌘ + ⇧ + P → Remote-Containers: Reopen in Container.

- PyCharm: open the cloned repository and configure Docker Compose as a remote interpreter.

- Terminal: open the cloned repository and run

docker compose run --rm devto start an interactive Dev Container.

Developing

- This project follows the Conventional Commits standard to automate Semantic Versioning and Keep A Changelog with Commitizen.

- Run

poefrom within the development environment to print a list of Poe the Poet tasks available to run on this project. - Run

poetry add {package}from within the development environment to install a run time dependency and add it topyproject.tomlandpoetry.lock. - Run

poetry remove {package}from within the development environment to uninstall a run time dependency and remove it frompyproject.tomlandpoetry.lock. - Run

poetry updatefrom within the development environment to upgrade all dependencies to the latest versions allowed bypyproject.toml. - Run

cz bumpto bump the package's version, update theCHANGELOG.md, and create a git tag.

Radix is a Belgium-based Machine Learning company.

Our vision is to make technology work for and with us. We believe that if technology is used in a creative way, jobs become more fulfilling, people become the best version of themselves, and companies grow.