This project is an extension of my course project in ES 202: Estimation and Control of Dynamical System. I really appreciate the collaboration of my teammates: En-Yu Yang and Feicheng Wang.

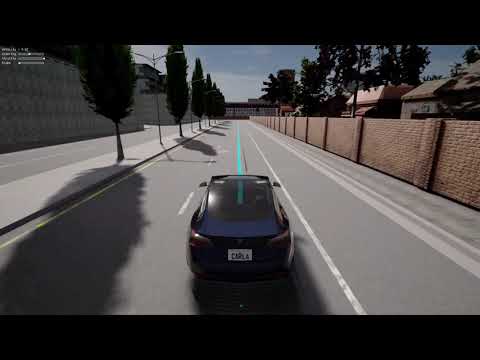

The majority of self-driving implementations in Carla are model-free RL. To achieve higher sample efficiency, a model-based algorithm will be preferred, eg. iLQR. However, Carla doesn't provide a set of equations for the dynamical model, so the first step here is to collect data and fit a differentiable model. The next step is to incorporate the model into iLQR framework. Before running iLQR, we're required to define the cost function, eg. distance to the reference path, and constraints. Luckily, Carla has an API for planning routes represented by waypoints, and the range of control input is written in Carla document. The final MPC-iLQR algorithm will be run at 10Hz.

To simplify the problem, the model data were collected manually through pressing WASD in pygame interface. Check files: model_data_collection_preparation.py and model_data_collection_main.py. The crux is to cover a wider range of states, as uniform as possible, and disturb the motion occasionally.

One pitfall here is to use end-to-end model, i.e. fully connected neural network with no prior of the dynamics. There will be no guarantee of the long-term stability and symmetry. Instead, my final version is based on the bicycle model.

(Image credit to Robust Model Predictive Control for Autonomous Vehicles/Self Driving Cars)

However, we couldn't directly apply the kinematic bicycle model. The relationship between acceleration and throttle, and the one between and

, are unknown. Besides, the length parameters,

and

, remain to be identified. Therefore, part of the dynamical model is a black box, and could be represented by a nerual network (NN).

My final model has the following form:

, where and

are the two outputs of a NN.

There're several tricks to increase the accuracy of the dynamical model.

- The first is the invariant of model to position

and rotation

, which has been shown in the above equation.

- The calculation of

could be problematic. Directly using formula

beta = np.arctan2(vy, vx) - yawwill leadto be incorrect, when both

and yaw are respectively closed to

and

, or

and

. One trick is to remap the problematic

to be closed to 0, and the

needs to be smoothed.

- Before been fed into NN,

will be prepossessed as

and

.

- To enforce the symmetry of

and steering, each input

will have a symmetric twin

. The two outputs are

and

. Reflecting the angular variables will only flip the sign of

. Therefore,

, and

. Without this trick, the model couldn't predict the vehicle to moving along a stright line.

- Positivity of v is guaranteed through square root method. The requirement can be written as when

,

. Since

, we could define the 1st dim of NN output to be

, and the

will satisfy the requirement for positive v. Without this trick, the model might falsely predict that the vehicle could move backwardly, while the reverse mode hasn't been considered.

- Use smooth activation function, eg.

tanh, although the Mean Square Error might be higher. Using nonsmooth activation function, eg.LeakyReluwill cause the iLQR planned input sequence unnaturally nonsmooth.

Here are two scenarios to be tested. One is pure rotation, with steering=throttle=1 and brake=0, and the other is pure rushing, with only throttle=1. As we can see from the following figure, the predictions are pretty closed to the real data. The result can be further improved by shortening the time interval from 0.1s to 0.01s, and replacing the fully connected NN with a LSTM cell.

The iLQR algorithm is strongly dependent on the speed of rolling out the dynamics and calculating Jacobian and Hessian Matrix. I compared three libraries here: Pytorch, Pydrake, Jax. Pydrake is the python binding of Drake written in C++. It has similar simulation function as simulink, but has more support for diffrerentiation and optimization. Jax is Numpy with high performance automatic differentiation. Under the hood, Jax leverages just-in-time XLA compilation and optimization.

-

vehicle continuous dynamics w/o NN

Inference Jacobian Pytorch 36.4 µs ± 1.21 µs 1.19 ms ± 64.7 µs Pydrake 39.4 µs ± 2.12 µs 27.5 µs ± 1.02 µs Jax 81.5 µs ± 2.26 µs 79.4 µs ± 908 ns -

NN with 3 fully connected layers

Inference Jacobian Pytorch 102 µs ± 3.99 µs 460 µs ± 8.71 µs Pydrake 7.08 ms ± 318 µs 10.9 µs ± 452 ns Jax 73.2 µs ± 1.92 µs 74.8 µs ± 4.95 µs -

vehicle continuous dynamics w/ NN

Inference Jacobian Pytorch 241 µs ± 11.3 µs 1.21 ms ± 45 µs Jax 74.1 µs ± 2.33 µs 71.3 µs ± 1.55 µs

Test Platform: i7-7700HQ

By comparison, it's clear to see Pydrake performs best at calculating Jacobian, but is not that compatible with NN inference. Pytorch is slow to calculate Jacobian when model is only partially described by NN. For Jax, all the operations has the similar time consumption. That could come from the Python-XLA overheads, and later iLQR-MPC testing shows that increasing the iteration number to several times larger will not affect the overall speed.

Pytorch can also be accelerated through JIT, but here I chose Jax for simplicity.

iLQR is extremely fast and memory efficient because it breaks the large optimization problem into small ones, and the later can be solved iteratively. However, one challenge for iLQR is that constraints are harder to be imposed.

Before considering the obstacles, the input constraint is much easier to be written down and much more crucial for the iLQR-MPC test. In Control-Limited Differential Dynamic Programming (Tassa 2014), three methods are introduced: Naive Clamping, Squashing Functions, and Box-DDP. It's claimed that

Clamping does not produce satisfying results since the clamped directions are not taken into account during the inversion of Quu. On the other hand, squashing introduces an artificial non-linearity in the saturated directions in a way that prevents good performance.

Their data reveal that as the interation number increases, the total cost of Box-DDP decreases fastest, and the naive clamping is the slowest.

For simplicity, I still chose to use Squashing Function Method. My choice for squash function is sine, rather than the sigmoid mentioned in the paper. The reason is to reduce the non-linearity, and the control input could easily reach its bound.

My design for cost function is that

A tested task is to navigate from the origin to point (20,20) and stop in 10s (100 time steps). As is shown in the following figures, the total cost quickly converges and the navigation is pretty smooth.

|

|

|

|

In practice, the planning and control are separated. Planning provides a set of waypoints that the vehicle should follow. One naive solution is to choose a front waypoint as the chasing target, and the problem reduces to pure pursuit. However, Constrained Iterative LQR for On-Road Autonomous Driving Motion Planning (Chen 2017) points out a problematic Scenario that the vehicle might deviate from the route at a sharp turn.

Image credit to Constrained Iterative LQR for On-Road

Autonomous Driving Motion Planning

Hence, to have a more refined trajectory, the cost function has to be designed to penalize the deviation. However, the waypoints are discrete so naively search for the mininal distance from the vehicle to all the waypoints is not differentiable everywhere. The paper proposes that a polynomial curve can be fitted locally, and every time step, an minimization is solved.

My choice for distance function is the log-sum-exp of the distance from vehicle to all the waypoints, i.e. . It's a common trick in Machine Learning, fully differentiable, and doesn't need to solve the minimization at each time step of each iteration. LSE has the property that

The design for cost function is that

, where the target_ratio is FUTURE_WAYPOINTS_AS_STATE*dp/(6*np.pi), and the time_step_ratio is TIME_STEPS/50. "dp" is the distance between waypoints, "FUTURE_WAYPOINTS_AS_STATE" is how many future waypoints should be considered in the distance function. These ratios are set to normalize the cost function, and thus the program is compatible to the different hyperparameters.

Run the route following iLQR program iteratively at 10Hz is the MPC in the video demonstrated in the first section. However, the running frequency could be higher than 1000Hz, since the average time per run is about 500-700us, when time step for prediction = 60, iteration per run = 300. Besides, the performance almost has no change when the iteration per run increases from 50 to 300.

Test Platform: i7-7700HQ

- Automatic data collection for system identification, with uncertainty considered

- Collect data directly from IMU, rather than calling Carla API

- Model considers the slope of the terrian, or dynamically provides terrian information

- Implement iLQG

- Implement Box-DDP

- Consider the constraints from obstacles

- Adaptively change the cost function